Introduction

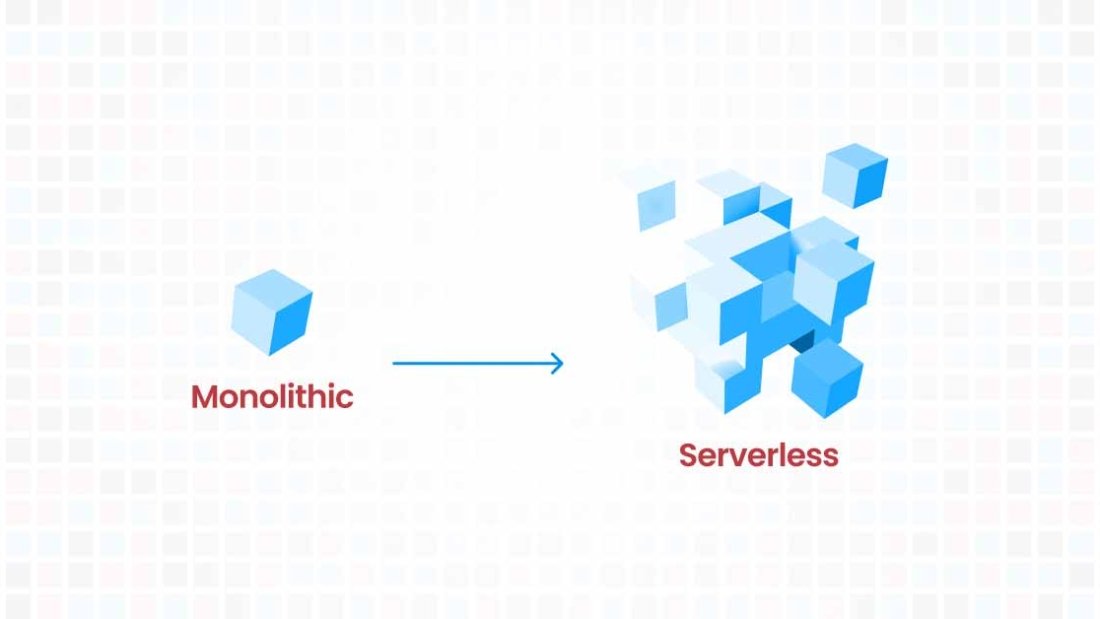

In a move that has sent ripples across the tech industry, HashiCorp recently announced a significant shift in its licensing model for Terraform, a popular open-source infrastructure as code (IaC) tool. After approximately nine years under the Mozilla Public License v2 (MPL v2), Terraform will now be released under the Business Source License (BSL) v1.1, which is not an open-source license. This unexpected transition raises important questions for companies that rely on Terraform, especially those using AWS.

Terraform has been a staple tool for many developers, enabling them to define and provision data center infrastructure using a declarative configuration language. Its support for many cloud providers made it a go-to choice. With this licensing change, however, the way organizations use Terraform may change considerably.

Implications for AWS Users and the Shift to Cloud Development Kit (CDK)

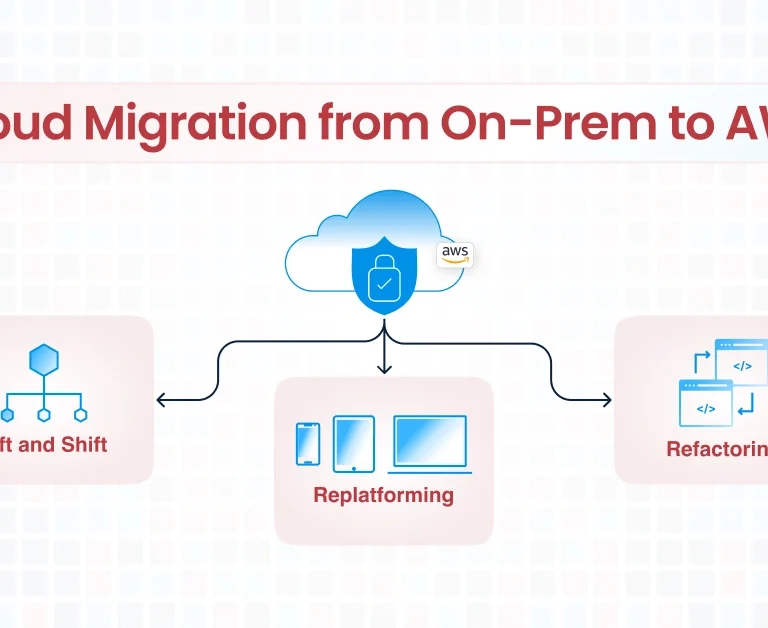

For businesses and developers focused on AWS, this change by HashiCorp presents an opportunity to evaluate AWS’s own Cloud Development Kit (CDK). The AWS CDK is an open-source software development framework for defining cloud infrastructure in code and provisioning it through AWS CloudFormation. It provides a high level of control and customization, specifically optimized for AWS services.
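The "code in, CloudFormation template out" idea can be sketched without any dependencies. The TypeScript below is a toy model, not the AWS CDK API: `BucketOptions`, `bucketResource`, and `synthesize` are hypothetical names invented for this illustration. Only the emitted resource shape (`AWS::S3::Bucket` with `VersioningConfiguration` and `BucketEncryption`) follows the real CloudFormation schema.

```typescript
// Dependency-free sketch: NOT the AWS CDK API. It only mimics the idea of
// turning typed code into a CloudFormation template.

// Hypothetical options type, invented for this illustration.
interface BucketOptions {
  versioned: boolean;
  encrypted: boolean;
}

// Minimal shape of a CloudFormation resource and template.
interface CfnResource {
  Type: string;
  Properties: Record<string, unknown>;
}

interface CfnTemplate {
  AWSTemplateFormatVersion: string;
  Resources: Record<string, CfnResource>;
}

// Translate the typed options into an AWS::S3::Bucket resource definition.
function bucketResource(options: BucketOptions): CfnResource {
  const properties: Record<string, unknown> = {};
  if (options.versioned) {
    properties["VersioningConfiguration"] = { Status: "Enabled" };
  }
  if (options.encrypted) {
    properties["BucketEncryption"] = {
      ServerSideEncryptionConfiguration: [
        { ServerSideEncryptionByDefault: { SSEAlgorithm: "AES256" } },
      ],
    };
  }
  return { Type: "AWS::S3::Bucket", Properties: properties };
}

// "Synthesize" a template from a map of logical IDs to bucket options.
function synthesize(buckets: Record<string, BucketOptions>): CfnTemplate {
  const resources: Record<string, CfnResource> = {};
  for (const logicalId of Object.keys(buckets)) {
    resources[logicalId] = bucketResource(buckets[logicalId]!);
  }
  return { AWSTemplateFormatVersion: "2010-09-09", Resources: resources };
}

// Example: one versioned, encrypted bucket rendered as template JSON.
const exampleTemplate = synthesize({
  LogsBucket: { versioned: true, encrypted: true },
});
console.log(JSON.stringify(exampleTemplate, null, 2));
```

In an actual CDK project, the library's own constructs and its synthesis step play the role of these hand-written functions, and the resulting template is deployed through CloudFormation.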

If you are a CIO or CTO selecting an Infrastructure as Code (IaC) tool for your organization, this licensing change may prompt you to reconsider. Because mitigating risk is central to tool selection, open-source alternatives that avoid licensing complications become more appealing. This shift could tip the decision towards truly open-source tools like AWS CDK over Terraform for streamlined IaC management, especially if you already use AWS as your cloud provider.

Why CloudKitect Leverages AWS CDK

CloudKitect, a provider of cloud solutions, has strategically chosen to build its products using AWS CDK. This decision is rooted in several key advantages:

- Optimization for AWS: AWS CDK is inherently designed for AWS cloud services, ensuring seamless integration and optimization. This means that for companies heavily invested in the AWS ecosystem, CDK provides a more streamlined and efficient way to manage cloud resources.

- Control and Customization: AWS CDK offers a high degree of control, allowing developers to define their cloud resources in familiar programming languages. This aligns well with CloudKitect’s commitment to providing customizable solutions that meet the specific needs of its clients.

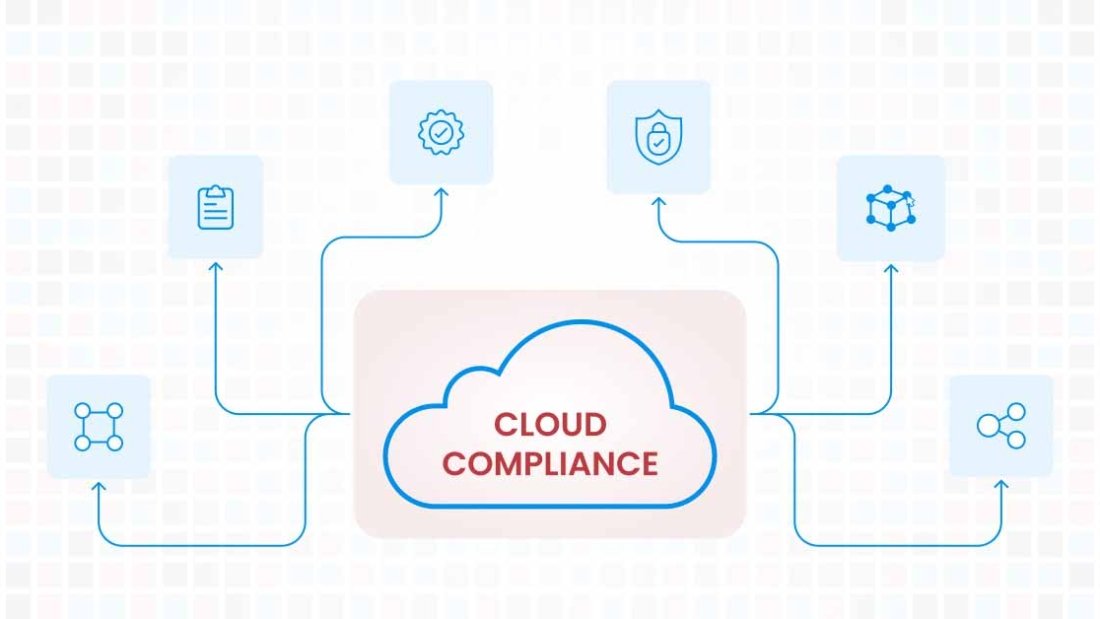

- Enhanced Security and Compliance: Given AWS’s stringent security protocols, infrastructure defined with CDK can be readily secured and tested for compliance with various security standards, a critical consideration for enterprises.

- Future-Proofing: By aligning closely with AWS’s own tools, CloudKitect positions itself to quickly adapt to future AWS innovations and updates, ensuring its products remain at the cutting edge.
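The security and compliance point above rests on the fact that a synthesized CloudFormation template is plain data that code can inspect. Here is a minimal, dependency-free sketch of such a check, assuming a template has already been loaded as an object. The function name `findBucketsWithoutDeclaredEncryption` and the rule itself are hypothetical, chosen only for illustration.

```typescript
// Dependency-free sketch of a compliance check over a CloudFormation template.
// The function name and the rule are hypothetical, chosen for illustration.

interface TemplateResource {
  Type: string;
  Properties?: Record<string, unknown>;
}

interface TemplateDocument {
  Resources: Record<string, TemplateResource>;
}

// Return the logical IDs of S3 buckets that do not explicitly declare
// a BucketEncryption property in the template.
function findBucketsWithoutDeclaredEncryption(
  template: TemplateDocument
): string[] {
  const offenders: string[] = [];
  for (const logicalId of Object.keys(template.Resources)) {
    const resource = template.Resources[logicalId];
    if (resource === undefined || resource.Type !== "AWS::S3::Bucket") {
      continue; // the rule only applies to S3 buckets
    }
    const properties = resource.Properties ?? {};
    if (properties["BucketEncryption"] === undefined) {
      offenders.push(logicalId);
    }
  }
  return offenders;
}

// Sample template with one compliant and one non-compliant bucket.
const sampleTemplate: TemplateDocument = {
  Resources: {
    LogsBucket: {
      Type: "AWS::S3::Bucket",
      Properties: {
        BucketEncryption: {
          ServerSideEncryptionConfiguration: [
            { ServerSideEncryptionByDefault: { SSEAlgorithm: "AES256" } },
          ],
        },
      },
    },
    UploadsBucket: { Type: "AWS::S3::Bucket" },
  },
};

console.log(findBucketsWithoutDeclaredEncryption(sampleTemplate));
```

For the sample template, the check flags only `UploadsBucket`. CDK projects typically express checks like this with the `aws-cdk-lib/assertions` module rather than hand-written traversal, so treat this as an outline of the idea, not a recommended implementation.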

Conclusion

HashiCorp’s shift in Terraform’s licensing model is a pivotal moment that prompts a reassessment of the tools used for cloud infrastructure management. For AWS-centric organizations and developers, AWS CDK emerges as a robust alternative, offering specific advantages in terms of optimization, customization, and security. CloudKitect’s adoption of AWS CDK for its product development is a testament to the kit’s capabilities and alignment with future cloud infrastructure trends. This strategic move may well signal a broader industry shift towards more specialized, provider-centric infrastructure as code tools. If you would like us to evaluate your existing infrastructure, schedule time with one of our AWS cloud experts today.

