Introduction

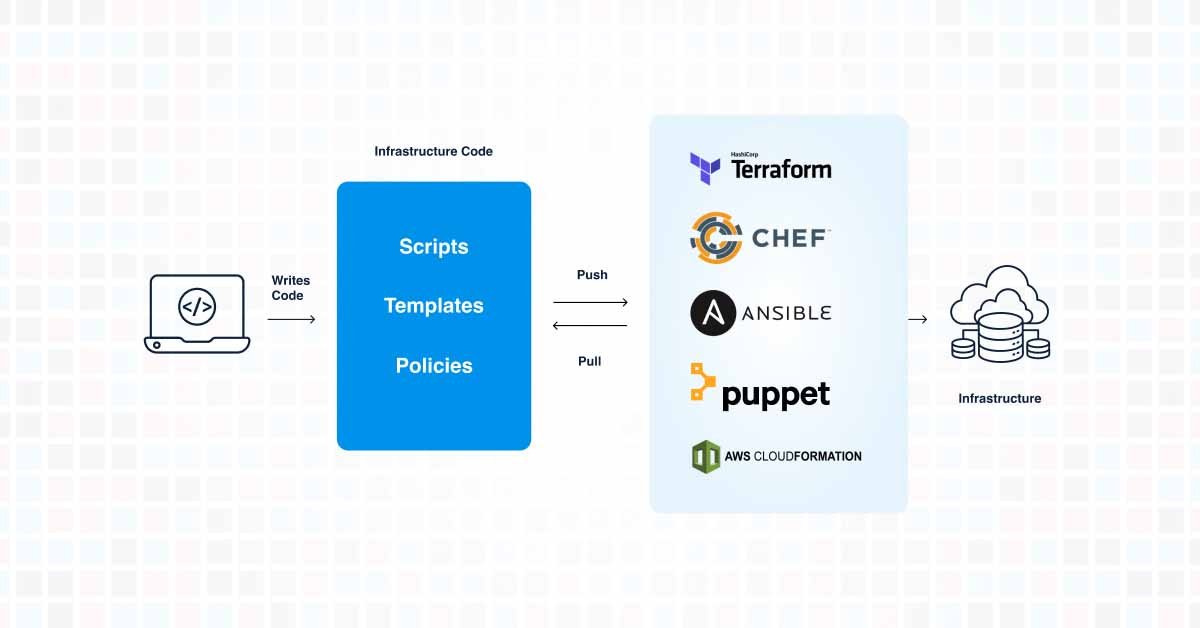

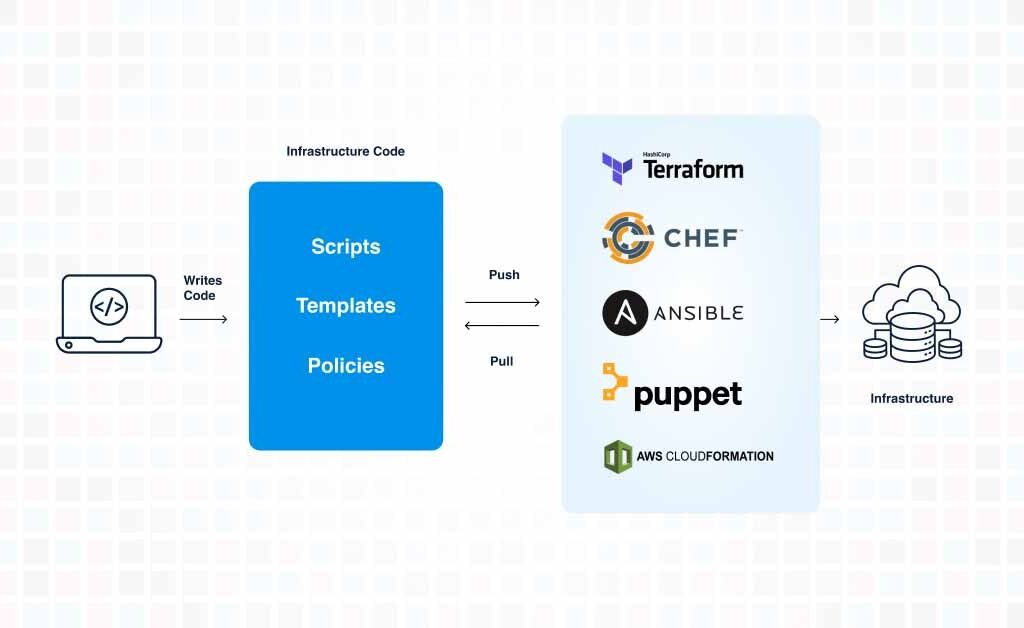

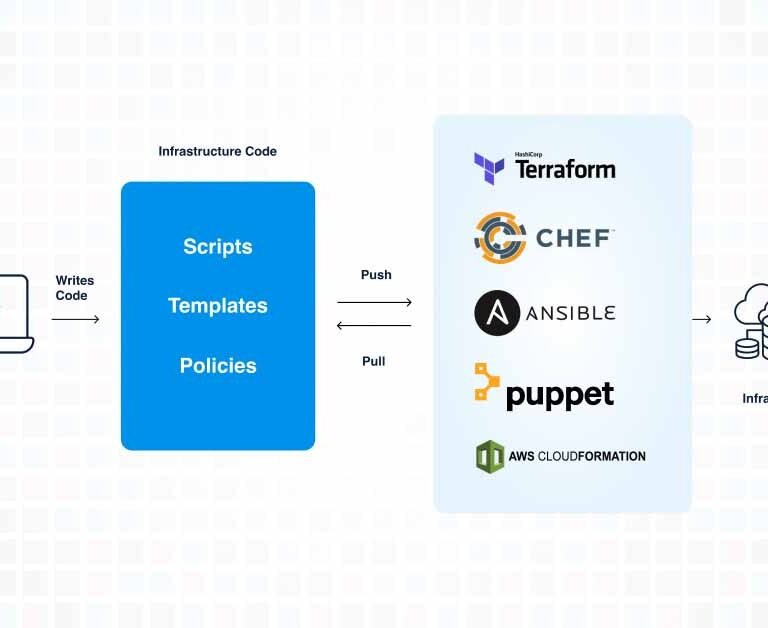

In DevOps and cloud computing, Infrastructure as Code (IaC) has become a core practice that changes how teams manage and provision IT infrastructure. IaC lets teams provision infrastructure through code rather than through manual processes. To be truly effective, however, infrastructure code must be treated with the same discipline as application code. Here’s how:

1. Choosing a Framework that Supports SDLC

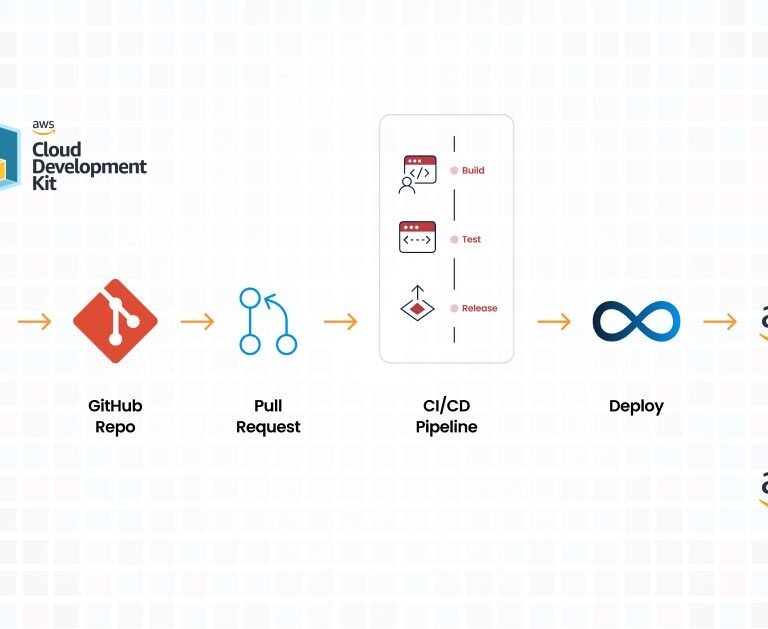

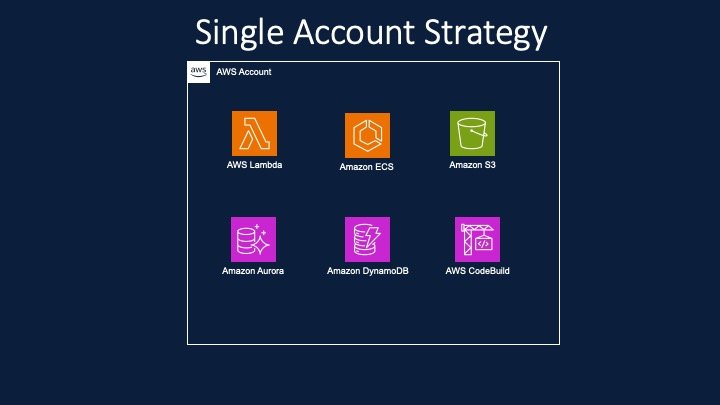

The Software Development Life Cycle (SDLC) is a well-established process comprising phases such as planning, development, testing, deployment, and maintenance. To implement IaC effectively, it’s essential to choose a framework that aligns with these stages. Tools like the AWS Cloud Development Kit (CDK) not only support automation but also fit into each phase of the SDLC, so infrastructure can be developed with the same rigor as application software.
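
To make the idea concrete, here is a minimal sketch of what "infrastructure defined in a general-purpose language" can look like. It does not use the CDK itself (that would require the `aws-cdk-lib` package); it uses only the Python standard library to build a CloudFormation-style template. The helper names `bucket_resource` and `synthesize`, the logical ID `ArtifactBucket`, and the rule that only production gets versioning are all assumptions made for illustration.

```python
import json


def bucket_resource(versioned: bool) -> dict:
    """Return a CloudFormation-style S3 bucket resource (hypothetical helper)."""
    resource = {"Type": "AWS::S3::Bucket", "Properties": {}}
    if versioned:
        resource["Properties"]["VersioningConfiguration"] = {"Status": "Enabled"}
    return resource


def synthesize(environment: str) -> dict:
    """Build a template for one environment.

    Assumption for this sketch: only production buckets are versioned.
    """
    return {
        "Resources": {
            "ArtifactBucket": bucket_resource(versioned=(environment == "production")),
        }
    }


if __name__ == "__main__":
    # Print the generated template so it can be reviewed or fed to a deploy step.
    print(json.dumps(synthesize("production"), indent=2, sort_keys=True))
```

Because the template is the return value of ordinary functions, it can be planned, reviewed, tested, and versioned like any other code, which is what lets a framework of this kind fit into the SDLC phases.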

2. Following the SDLC Process for Developing Infrastructure

Treating infrastructure as code means applying the same SDLC rigor that is used for application development. This involves:

- Planning: Defining requirements and scope for the infrastructure setup.

- Development: Writing IaC scripts to define the required infrastructure.

- Testing: Writing unit tests and functional tests to validate the infrastructure code.

- Deployment: Using automated tools to deploy infrastructure changes.

- Maintenance: Regularly updating and maintaining infrastructure scripts.
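
The testing phase above can be sketched as a plain unit test that inspects the generated template before anything is deployed. This is a minimal illustration, not a prescribed method: `build_template` stands in for the output of whatever IaC tool is in use, and the bucket names and the "every bucket must declare encryption" rule are assumptions chosen for the example.

```python
def build_template() -> dict:
    """Stand-in for the synthesized output of an IaC tool (hypothetical content)."""
    return {
        "Resources": {
            "LogsBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    "BucketEncryption": {
                        "ServerSideEncryptionConfiguration": [
                            {"ServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
                        ]
                    }
                },
            },
            "ScratchBucket": {"Type": "AWS::S3::Bucket", "Properties": {}},
        }
    }


def unencrypted_buckets(template: dict) -> list:
    """Return the logical IDs of S3 buckets that declare no BucketEncryption."""
    return sorted(
        logical_id
        for logical_id, resource in template.get("Resources", {}).items()
        if resource.get("Type") == "AWS::S3::Bucket"
        and "BucketEncryption" not in resource.get("Properties", {})
    )


def test_flags_buckets_without_encryption():
    # ScratchBucket is deliberately left unencrypted so the check has something to find.
    assert unencrypted_buckets(build_template()) == ["ScratchBucket"]
```

A test runner such as pytest would collect `test_flags_buckets_without_encryption` automatically. In a real project the assertion would more likely require the list to be empty, so that an unencrypted bucket fails the build.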

3. Integration with Version Control like Git

Just like application source code, infrastructure code must be version-controlled to track changes, maintain history, and facilitate collaboration. Integrating IaC with a version control system like Git allows teams to keep a record of all modifications, participate in code review practices, roll back to previous versions when necessary, and manage different environments (development, staging, production) more efficiently.
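
One reason version control works well here is that infrastructure definitions are plain text, so a reviewer can see exactly what a change does. The sketch below uses Python's standard `difflib` to compare two revisions of a template, roughly what a pull request diff would show. The `previous` and `proposed` templates and the helper names `render` and `review_diff` are hypothetical.

```python
import difflib
import json


def render(template: dict) -> list:
    """Serialize with sorted keys so the same template always yields the same lines."""
    return json.dumps(template, indent=2, sort_keys=True).splitlines()


def review_diff(old: dict, new: dict) -> list:
    """Return unified-diff lines between two template revisions."""
    return list(
        difflib.unified_diff(render(old), render(new), "previous", "proposed", lineterm="")
    )


# Two hypothetical revisions: the proposed one adds a one-day retention period.
previous = {"Resources": {"Queue": {"Type": "AWS::SQS::Queue", "Properties": {}}}}
proposed = {
    "Resources": {
        "Queue": {
            "Type": "AWS::SQS::Queue",
            "Properties": {"MessageRetentionPeriod": 86400},
        }
    }
}

if __name__ == "__main__":
    print("\n".join(review_diff(previous, proposed)))
```

Sorting keys during serialization is a small design choice that matters: it keeps diffs limited to real changes, so history and rollbacks stay readable.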

4. Following the Agile Process with Project Management Tools like JIRA

Implementing IaC within an agile framework enhances flexibility and responsiveness to changes. Using project management tools like JIRA allows teams to track progress, manage backlogs, and maintain a clear view of the development pipeline. It ensures that infrastructure development aligns with the agile principles of iterative development, regular feedback, and continuous improvement.

5. Using Git Branching Strategy and CI/CD Pipelines

A Git branching strategy is crucial for maintaining a stable production environment while allowing new features to be developed and tested. This strategy, coupled with Continuous Integration/Continuous Deployment (CI/CD) pipelines, ensures that infrastructure code can be deployed to production rapidly and reliably. CI/CD pipelines automate the testing and deployment process, reducing the chance of human error and keeping infrastructure changes in step with application deployments.
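
As a rough sketch of how a branching strategy and a pipeline fit together, the code below maps branches to environments and decides which pipeline stages run for a given branch. The branch names, environment names, and stage labels are assumptions for illustration; a real pipeline would express the same rule in its CI/CD tool's own configuration.

```python
from typing import Optional

# Hypothetical branch-to-environment mapping; adjust to your own branching model.
BRANCH_ENVIRONMENTS = {
    "main": "production",
    "release": "staging",
    "develop": "development",
}


def target_environment(branch: str) -> Optional[str]:
    """Return the environment a branch deploys to, or None for test-only branches."""
    return BRANCH_ENVIRONMENTS.get(branch)


def pipeline_stages(branch: str) -> list:
    """Every branch is synthesized and tested; only mapped branches are deployed."""
    stages = ["synthesize", "unit-test"]
    environment = target_environment(branch)
    if environment is not None:
        stages.append(f"deploy:{environment}")
    return stages


if __name__ == "__main__":
    for branch in ("feature/add-queue", "develop", "main"):
        print(branch, "->", pipeline_stages(branch))
```

With this rule, feature branches get fast feedback from tests without touching any environment, while production changes only ever come from the protected `main` branch.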

Conclusion

Treating Infrastructure as Code with the same discipline as software development is not just a best practice; it’s a necessity in today’s fast-paced IT environment. By following the SDLC, integrating with version control, adhering to agile principles, and using CI/CD pipelines, organizations can make their infrastructure as robust, scalable, and maintainable as their software applications. The result is a more agile, efficient, and reliable IT infrastructure that can support the changing needs of modern businesses.

Talk to Our Cloud/AI Experts

Search Blog

About us

CloudKitect revolutionizes the way technology startups adopt cloud computing by providing innovative, secure, and cost-effective turnkey solution that fast-tracks the digital transformation. CloudKitect offers Cloud Architect as a Service.

Related Resources

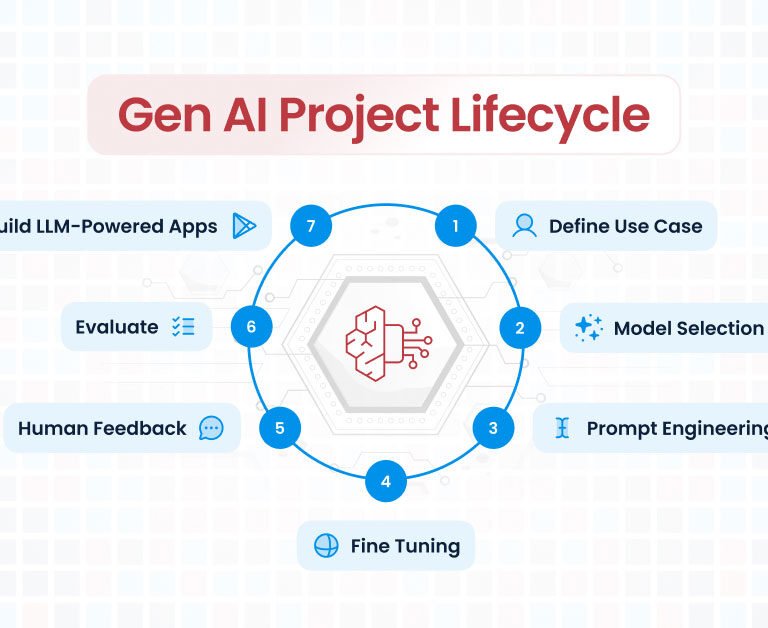

Generative AI Project Lifecycle: A Comprehensive Guide

Unlocking Data Insights: Chat with Your Data | PDF and Beyond